The ability to assess detection and discrimination of speech by infants has proved elusive. Dr Iain Jackson and colleagues discuss how new technologies and fresh approaches might offer valuable insight into young infants’ behavioural responses to sound.

The limits of current behavioural assessment in young infants

Finding out whether an adult can hear something can often be as straightforward as simply asking them. Getting the same information from an infant is considerably less straightforward. However, obtaining information about an infant’s ability to detect and discriminate between sounds is crucial when making management decisions. This is especially important if we are to realise the benefits of newborn screening and early intervention.

Objective physiologic tests, such as otoacoustic emissions (OAE) and auditory brainstem response (ABR), have become the most widely used assessments of the peripheral auditory system in young infants. Cortical evoked potentials show promise, but require sophisticated technology and lengthy recording times, making them unsuitable for widespread screening programmes. Moreover, the physiologic techniques in current use do not provide the information needed to assess the benefit of intervention. Behavioural responses are considered the ‘gold standard’ for assessing perception of sound, and provide a measure of the functioning of the entire auditory system. That said, obtaining reliable and resource-efficient behavioural responses in young infants is challenging for a number of reasons, including:

- Young infants’ behavioural responses are limited to higher sound intensities.

- Behavioural responses can be subtle and highly variable (both within and between individuals).

- Tests require expert examiners, which makes them expensive, and limits our ability to provide widespread screening.

- Manual procedures are slow, and infants habituate rapidly to sounds presented to them, meaning that many become too fatigued to complete the test.

The general consensus in clinical practice is that behavioural observations should not (or cannot) be relied upon until the infant reaches a developmental age of seven to nine months, when head turns to sounds can be elicited and rewarded. Even then, test procedures can be time- and labour-intensive, and scoring is subject to bias.

“The ability to capture an increasing range of behaviours allows us to reconsider what responses are available to us for the tools we use.”

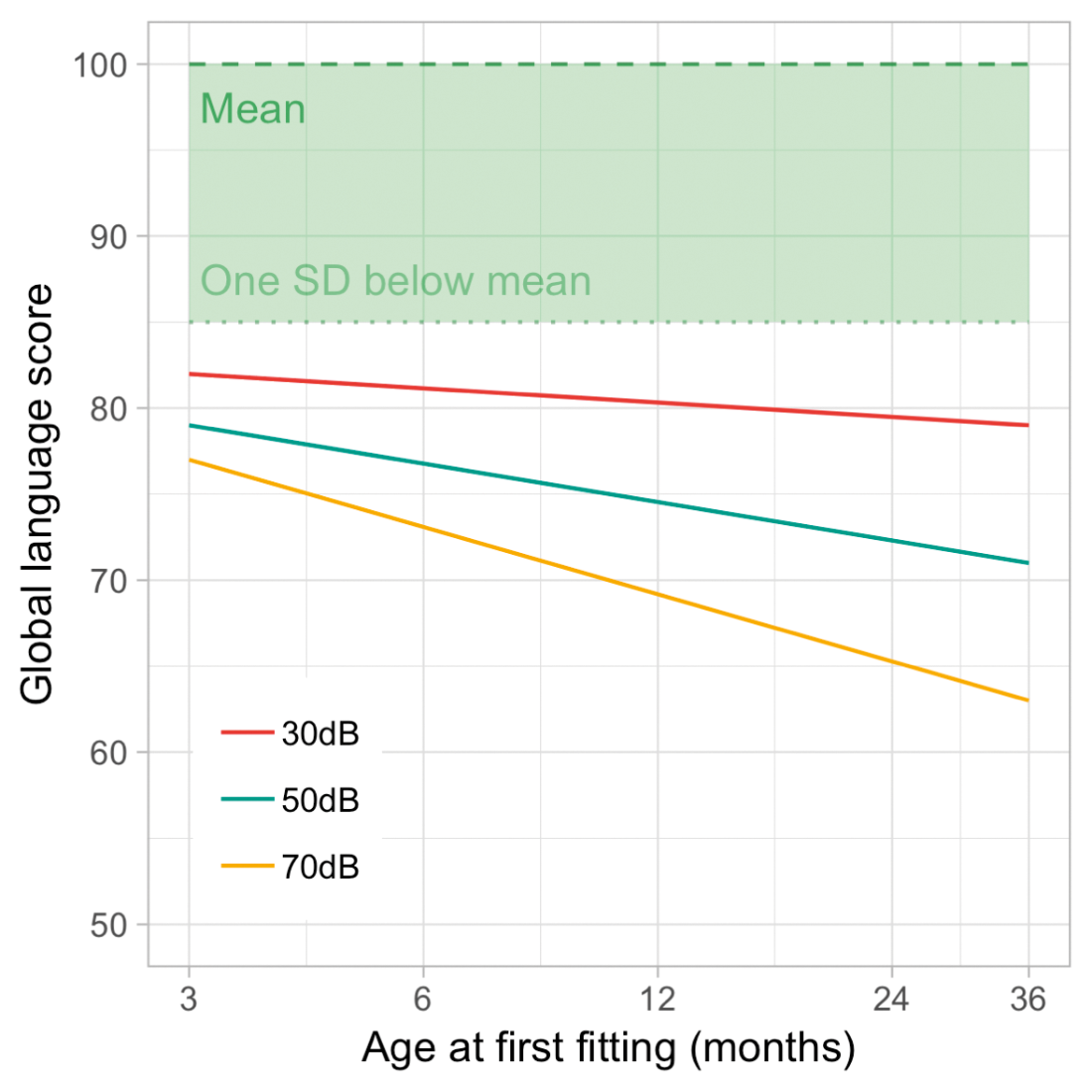

Figure 1. An illustration of the importance of early intervention; the effect of age at fitting of hearing aids on the language outcomes of children with different levels of hearing loss (Better-ear 4-frequency average). (Adapted with permission from Ching et al [1]; personal correspondence with Harvey Dillon.)

Technology opens the door to novel approaches

Fortunately, new technologies offer opportunities for cheaper, faster, and more reliable testing. An ever-increasing ability to capture different behaviours allows us to reconsider what responses are available for the tools we use. Outside of audiology, techniques such as eye tracking and pupillometry have been widely adopted in developmental psychology, and have transformed the variety and depth of questions infancy researchers are able to explore. At least two novel approaches have proved effective in efforts to measure hearing and vision efficiently and accurately in babies: (i) maximising engagement with a video display to minimise lapses in attention, and (ii) adopting a flexible, integrative approach to automation that simulates previous observer-based methods [2]. Elsewhere, video-based analyses now offer other surprising insights, many of which are inaccessible to human observers. Recent work has shown, for example, that it is possible to detect subtle movements associated with babies’ breathing, or to detect heart rate from imperceptible, pixel-level changes in the colour of faces [3].

In addition to impressive developments in the measurement of responses, modern computational techniques, such as machine learning, increasingly promise to offer insights and predictive value using healthcare data, including in the assessment of infants. For example, in a recent study, Goodfellow and colleagues placed wearable sensors on infants and recorded their spontaneous movements over the course of a day [4]. Their machine learning algorithms were able to differentiate between the movements of typically developing infants and those at risk of developmental delays, potentially paving the way for a clinically useful tool for predictive assessment.

Applying new technologies to infant audiometry

As part of the NIHR Manchester Biomedical Research Centre, we are currently looking to combine automatic facial recognition, head tracking, and eye tracking, along with state-of-the-art machine learning algorithms, in order to be able to detect when an infant hears a sound.

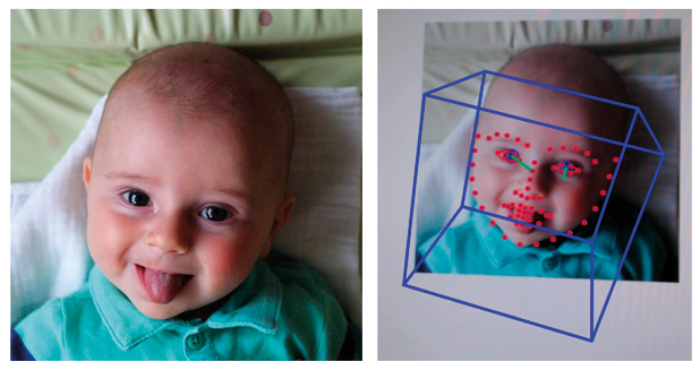

Infants excel at sending signals with their faces – all caregivers are acutely aware of how easy it is to recognise when an infant is unhappy! In addition to clear and obvious signals, like crying, there might also be other valuable markers we can capitalise on. Facial recognition software can detect and track a wide range of facial behaviours, from broad, global features such as head-turns, down to more subtle changes in individual features or areas of the face. For example, infants may raise their eyebrows ever so slightly when they hear a sound, or smile, or frown, or let their mouths fall open slightly. Whatever the response might be, if one or more responses occur frequently enough, it will provide a pattern for the algorithm to recognise, and thus provide a signal for researchers to measure.

Figure 2. Example of automatic face detection using OpenFace, capturing the overall head position (blue box) and facial features (red dots) of a four-month-old infant.

In this approach, we take video recordings of many infants’ faces and capture a range of examples of the spontaneous expressions and movements infants commonly make in the absence of sound. We also take video recordings of whatever their faces do when we play sounds. We can then use facial recognition software to map a number of points to the faces in each scenario. Finally, we can ask a computer to look for common patterns in the points, and, if patterns are found, whether they are different enough to tell the two scenarios apart.
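The final step, asking a computer whether the two scenarios can be told apart, can be illustrated with a deliberately small sketch. It is not the method used in our project: the nearest-centroid classifier, the two features (eyebrow raise and mouth opening), and the simulated data are all assumptions chosen for illustration. Each trial is reduced to a short list of numbers, and leave-one-out cross-validation estimates how often a held-out trial is labelled correctly.

```python
import random
from statistics import mean


def centroid(rows):
    """Mean feature vector of a list of equal-length feature vectors."""
    return [mean(column) for column in zip(*rows)]


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def predict(train, features):
    """Label a trial by its nearest class centroid.

    `train` is a list of (features, label) pairs.
    """
    labels = sorted({label for _, label in train})
    centroids = {
        lab: centroid([f for f, l in train if l == lab]) for lab in labels
    }
    return min(labels, key=lambda lab: squared_distance(centroids[lab], features))


def leave_one_out_accuracy(trials):
    """Hold out each trial in turn, train on the rest, and score the prediction."""
    hits = 0
    for i, (features, label) in enumerate(trials):
        train = trials[:i] + trials[i + 1:]
        hits += predict(train, features) == label
    return hits / len(trials)


def simulate_trials(n_per_class, effect, seed=0):
    """Hypothetical data: two facial features per trial, in arbitrary units.

    `effect` is the assumed average shift in both features when a sound plays.
    """
    rng = random.Random(seed)
    trials = []
    for label, shift in (("silence", 0.0), ("sound", effect)):
        for _ in range(n_per_class):
            eyebrow_raise = rng.gauss(shift, 1.0)
            mouth_opening = rng.gauss(shift, 1.0)
            trials.append(([eyebrow_raise, mouth_opening], label))
    return trials


if __name__ == "__main__":
    for effect in (0.0, 3.0):
        accuracy = leave_one_out_accuracy(simulate_trials(40, effect))
        print(f"effect={effect}: leave-one-out accuracy = {accuracy:.2f}")
```

When the simulated effect is zero, accuracy should hover around chance, which is the outcome to expect if infants' faces carry no usable signal. Accuracy well above chance on held-out trials is what would indicate that the two scenarios differ enough to be told apart.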

“Success with our facial recognition approach would open up the possibility of remote, teleaudiology assessment of infants in their own homes, helping to reduce obstacles for hard-to-reach populations.”

If differences can be reliably detected between the responses that occur when infants do and do not hear sounds, or when they hear differences between sounds, then we can begin to use this approach to predict a wide range of clinically relevant responses in individual infants, such as the detection of conversational speech, discrimination of speech sounds, and identification of minimum response level. Such a system would provide hugely valuable insights into infants’ hearing efficiently, cheaply, and quickly. It would require minimal specialist equipment and no equipment in contact with the infant, and could be operated by a single researcher or clinician.

Where might improvements in technology lead us in the future?

In addition to the success of lab-based eye tracking paradigms, recent work suggests that infants’ attention can be measured from webcam video of their eye movements, and that even detailed eye tracking can be performed via an ordinary webcam [5,6]. Similar success with our facial recognition approach would open up the possibility of remote, teleaudiology assessment of infants in their own homes, helping to reduce obstacles for hard-to-reach populations and offering the flexibility that caregivers frequently require. Another possible development is that tests become increasingly tailored to individuals. Automating the delivery of the test allows for real-time, response-contingent selection and presentation of the stimuli we use to elicit responses. Stimuli could easily incorporate material directly relevant to each individual infant, such as images of caregivers’ faces or samples of caregivers’ speech, in order to maximise infant attention and the likelihood of a response.
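As a rough illustration of response-contingent stimulus selection, the sketch below lowers the presentation level after each detected response and raises it after each miss. No particular procedure is specified here, so everything in it is an assumption: the "down 10, up 5" step rule, the starting level, the stopping rule, and the function `detect_response`, which stands in for whatever automated response detector is available.

```python
def adaptive_track(detect_response, start_db=60, step_down=10, step_up=5,
                   floor_db=0, ceiling_db=90, n_reversals=4, max_trials=50):
    """Run a simple adaptive track and return (history, lowest_response_level).

    `detect_response(level_db)` is a hypothetical callable returning True when
    a response to a sound at `level_db` is detected.
    """
    level = start_db
    last_direction = None
    reversals = 0
    history = []
    while reversals < n_reversals and len(history) < max_trials:
        responded = detect_response(level)
        history.append((level, responded))
        # Go quieter after a response, louder after a miss.
        direction = "down" if responded else "up"
        if last_direction is not None and direction != last_direction:
            reversals += 1
        last_direction = direction
        step = -step_down if responded else step_up
        level = max(floor_db, min(ceiling_db, level + step))
    response_levels = [lvl for lvl, hit in history if hit]
    lowest = min(response_levels) if response_levels else None
    return history, lowest


if __name__ == "__main__":
    # Idealised simulated infant who responds to any sound at or above 35 dB.
    history, lowest = adaptive_track(lambda level_db: level_db >= 35)
    for level_db, responded in history:
        print(level_db, "response" if responded else "no response")
    print("lowest level with a response:", lowest)
```

A real infant responds far less consistently than this simulated one, so a usable procedure would need repeated presentations at each level and some allowance for habituation.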

The Royal Manchester Children’s Hospital offers specialist and tertiary services for children with hearing loss. It is the largest and busiest children’s hospital in the UK, as well as the UK’s largest audiology and otology centre.

“For many caregivers, it can be difficult to recognise hearing impairment in their baby, and to appreciate the effects hearing loss can have. Behavioural testing can be hugely important in helping to show the need for and benefit of treatment.”

Rachel Booth PhD MSc, Principal Clinical Scientist/Head of Paediatric Audiology.

“Behavioural assessment is crucial for clinical decisions about treatment, and intervention is delayed until measurement becomes feasible. Reliable behavioural assessment of younger infants would allow us to lower the age of intervention, helping to significantly improve outcomes in children’s language and cognitive development.”

Iain A Bruce MD FRCS (ORL-HNS), Consultant Paediatric Otolaryngologist/Honorary Clinical Professor of Paediatric Otolaryngology MAHSC, University of Manchester.

Acknowledgement:

This research is funded by the NIHR Manchester Biomedical Research Centre. The views expressed are those of the author(s) and not necessarily those of the NHS, the NIHR, or the Department of Health.

TAKE HOME MESSAGE

- The benefits of newborn screening and early identification of hearing impairments are currently not fully realised due to the limitations of behavioural techniques.

- New technology can offer novel insights into infants’ behavioural responses, including those beyond the natural limitations of human observers.

- At the Manchester Centre for Audiology and Deafness (ManCAD; University of Manchester, UK) we are attempting to combine novel response measures and machine learning in order to determine when an infant hears a sound.

- Approaches like ours, aided by advances in technology, have the potential to revolutionise infant hearing assessment in research labs and clinical practice by offering accessible, fast, inexpensive, and easy to use tools.

References

1. Ching TYC, Dillon H, Button L, et al. Age at Intervention for Permanent Hearing Loss and 5-Year Language Outcomes. Pediatrics 2017;140(3):e20164274.

2. Jones PR, Kalwarowsky S, Braddick OJ, et al. Optimizing the rapid measurement of detection thresholds in infants. Journal of Vision 2015;15(11):2.

3. Durand F, Freeman WT, Rubinstein M. A World of Movement. Scientific American 2015;312(1):48-51.

4. Goodfellow D, Zhi R, Funke R. Predicting Infant Motor Development Status using Day Long Movement Data from Wearable Sensors. https://arxiv.org/pdf/1807.02617.pdf. Last accessed October 2018.

5. Semmelmann K, Weigelt S. Online webcam-based eye tracking in cognitive science: A first look. Behavior Research Methods 2018;50(2):451-65.

6. Tran M, Cabral L, Patel R, Cusack R. Online recruitment and testing of infants with Mechanical Turk. Journal of Experimental Child Psychology 2017;156:168-78.