If you are over 30 years of age, you have witnessed a technology revolution that has profoundly changed how we live: computers have gone from being an oddity to an everyday feature of our homes and workplaces; the cellphone is ubiquitous; and hard-copy letters are rare now that we can communicate instantly by email. And yet, what we have witnessed is only the beginning. Drs Hughes and Agrawal give us a glimpse of what is still to come, and of what will be featured at the IFOS World Congress in Vancouver.

Machine learning in healthcare

Over the last five years, significant advances in high-performance computing have led to major scientific breakthroughs in machine learning (a form of artificial intelligence), especially in image processing and data analysis.

These breakthroughs now affect many aspects of our lives, from the way our phones sort and recognise photographs to automated translation and transcription services, and they have the potential to revolutionise the practice of medicine.

The most promising form of artificial intelligence used in medical applications today is deep learning. Deep learning is a type of machine learning in which deep neural networks (networks with many layers of artificial neurons) are trained to identify patterns in data [1]. A form of neural network commonly used in image processing is the convolutional neural network (CNN), which learns small filters that are slid across an image to detect features such as edges and textures. Initially developed for general-purpose visual recognition, CNNs have shown considerable promise in medical imaging, for instance in the detection and classification of disease.
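To make the idea of a convolutional layer concrete, here is a minimal sketch in Python with NumPy (an assumption; the article names no implementation). It slides a single 3×3 filter over a toy image and applies a ReLU, the basic operation a CNN layer repeats with many filters. The filter weights are hand-picked to respond to a vertical edge, whereas a real CNN learns its weights from labelled images; the function names are illustrative only.

```python
import numpy as np

def conv2d_valid(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Slide `kernel` over `image` without padding ("valid" mode).

    Like most deep learning libraries, this computes cross-correlation:
    the kernel is not flipped before it is applied.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Weighted sum of the image patch currently under the kernel
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def relu(x: np.ndarray) -> np.ndarray:
    """Rectified linear unit: keep positive responses, zero the rest."""
    return np.maximum(x, 0.0)

# Toy 6x6 "scan": dark on the left, bright on the right (a vertical edge)
image = np.zeros((6, 6))
image[:, 3:] = 1.0

# Hand-chosen vertical-edge detector; in a CNN these weights are learned
kernel = np.array([[-1.0, 0.0, 1.0],
                   [-1.0, 0.0, 1.0],
                   [-1.0, 0.0, 1.0]])

feature_map = relu(conv2d_valid(image, kernel))
print(feature_map)
```

Running it prints a 4×4 feature map whose two middle columns are 3 and whose outer columns are 0, marking where the dark-to-bright edge sits.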

Automated image segmentation, in which an algorithm labels every pixel or voxel of a scan according to the structure it belongs to, has numerous clinical applications, ranging from quantitative measurement of tissue volume to surgical planning and guidance, medical education and even cancer treatment planning. It is hoped that such advances in automated data analysis will help deliver more timely care and alleviate workforce shortages in areas such as breast cancer screening [2], where patient demand already outstrips the availability of specialist breast radiologists in many parts of the world.
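Measuring tissue volume from a segmentation reduces to simple arithmetic: count the voxels assigned to a structure and multiply by the volume of one voxel. The sketch below assumes the segmentation is a NumPy array of integer labels (0 for background) and that the voxel spacing in millimetres is known from the scan header; the label numbers, array size and spacing are hypothetical.

```python
import numpy as np

def label_volumes_mm3(label_map: np.ndarray, spacing_mm: tuple) -> dict:
    """Return {label: volume in mm^3} for every non-zero label in the map."""
    voxel_volume = float(np.prod(spacing_mm))  # mm^3 occupied by one voxel
    labels, counts = np.unique(label_map, return_counts=True)
    return {
        int(label): int(count) * voxel_volume
        for label, count in zip(labels, counts)
        if label != 0  # 0 is treated as background
    }

# Hypothetical 10x10x10 segmentation with two labelled structures
label_map = np.zeros((10, 10, 10), dtype=np.int32)
label_map[2:6, 2:7, 0:6] = 1   # 4 x 5 x 6 = 120 voxels
label_map[7:9, 7:9, 7:9] = 2   # 2 x 2 x 2 = 8 voxels

# Hypothetical spacing: 0.5 mm in-plane, 1.0 mm slice thickness
volumes = label_volumes_mm3(label_map, (0.5, 0.5, 1.0))
print(volumes)  # {1: 30.0, 2: 2.0}
```

Dividing by 1000 converts the results to millilitres if that unit is preferred clinically.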

Applications in otolaryngology

Artificial intelligence is quickly making its way into our specialty, and both otolaryngologists and audiologists will soon be incorporating it into their clinical practice. Machine learning has been used to classify auditory brainstem responses automatically [8] and to estimate audiometric thresholds [9]. This has enabled accurate online hearing testing [10], which could serve rural and remote areas without access to standard audiometry (see the article by Dr Matthew Bromwich).
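Threshold estimation can be illustrated with a deliberately simple, classical stand-in: fit a logistic psychometric function (probability of a "heard" response against presentation level) by maximum likelihood and read off the level at which that probability is 50%. This is not the specific method used in the studies cited above. It is a sketch that assumes the proportion of "heard" responses at each level is already available, and the function name and synthetic listener (threshold 35 dB, spread 5 dB) are hypothetical.

```python
import numpy as np

def fit_psychometric(levels_db, p_heard, n_iter=50):
    """Fit p(level) = 1 / (1 + exp(-(a + b * level))) by damped Newton-Raphson.

    Returns (threshold_db, spread_db): the level where p = 0.5, i.e. -a / b,
    and 1 / b, which sets how steeply the curve rises.
    """
    levels = np.asarray(levels_db, dtype=float)
    y = np.asarray(p_heard, dtype=float)
    x = np.column_stack([np.ones_like(levels), levels])  # design matrix [1, level]

    def log_lik(beta):
        eta = x @ beta
        # Bernoulli log-likelihood, written stably: y*eta - log(1 + e^eta)
        return np.sum(y * eta - np.logaddexp(0.0, eta))

    beta = np.zeros(2)
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(x @ beta)))
        grad = x.T @ (y - p)                          # score vector
        hess = x.T @ (x * (p * (1.0 - p))[:, None])   # Fisher information
        step = np.linalg.solve(hess, grad)
        t = 1.0
        # Halve the step until the likelihood no longer decreases
        while log_lik(beta + t * step) < log_lik(beta) and t > 1e-8:
            t *= 0.5
        beta = beta + t * step
    a, b = beta
    return -a / b, 1.0 / b

# Synthetic listener: true threshold 35 dB, spread 5 dB; the proportions are
# assumed to come from many presentations at each level
levels = np.arange(0, 85, 5, dtype=float)
p_heard = 1.0 / (1.0 + np.exp(-(levels - 35.0) / 5.0))

threshold, spread = fit_psychometric(levels, p_heard)
print(f"threshold ~ {threshold:.1f} dB, spread ~ {spread:.1f} dB")
```

With noiseless synthetic data like this, the fit should recover values very close to 35 dB and 5 dB; real responses are noisy and far fewer, which is where more sophisticated machine learning approaches come in.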

Machine learning algorithms have also been central to the development of multiple assistive technologies that can help patients to overcome or alleviate disabilities. For example, in the context of hearing loss, significant advances in automated transcription apps, driven by machine learning algorithms, have proven particularly useful in recent months for patients who can no longer lipread because of the face coverings worn to prevent the spread of COVID-19.

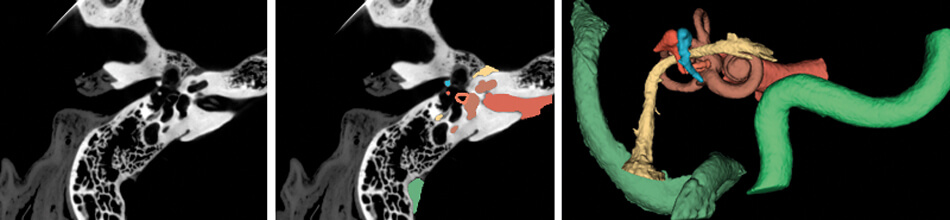

Figure 1. (Left) CT scan of the right temporal bone. (Middle) Structures of the temporal bone automatically segmented using a TensorFlow-based deep learning algorithm. (Right) Three-dimensional model of the critical structures of the temporal bone for use in surgical planning and simulation.

Images courtesy of the Auditory Biophysics Laboratory, Western University, London, Canada.

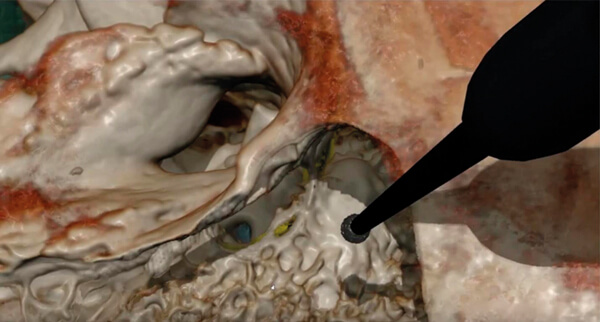

Figure 2. The virtual reality simulator CardinalSim (https://cardinalsim.stanford.edu/) depicting a left mastoidectomy and facial recess approach. The facial nerve (yellow) and round window (blue) were automatically delineated using deep learning techniques.

Image courtesy of the Auditory Biophysics Laboratory, Western University, London, Canada.

In addition to their role in general image classification, CNNs are likely to play a significant role in bringing machine learning into healthcare, especially in image-heavy specialties such as otolaryngology. For otologists, deep learning algorithms can already identify detailed temporal bone structures on CT images [3-6], segment intracochlear anatomy [7] and locate individual cochlear implant electrodes [8] (Figure 1). Automatic analysis of critical structures on temporal bone scans has already facilitated patient-specific virtual reality otologic surgery [9] (Figure 2). Deep learning is also likely to be critical to customised cochlear implant programming in the future.
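Claims that an algorithm can identify temporal bone structures are typically supported by comparing its output with an expert's manual segmentation. A common overlap measure for this is the Dice similarity coefficient, 2|A ∩ B| / (|A| + |B|). The sketch below assumes both segmentations are same-shaped binary NumPy masks; the toy masks are hypothetical, and returning 1.0 for two empty masks is a convention chosen here.

```python
import numpy as np

def dice_coefficient(pred: np.ndarray, truth: np.ndarray) -> float:
    """Dice = 2|A ∩ B| / (|A| + |B|): 1.0 is perfect overlap, 0.0 is none."""
    a = pred.astype(bool)
    b = truth.astype(bool)
    denom = a.sum() + b.sum()
    if denom == 0:
        return 1.0  # convention: two empty masks agree perfectly
    return float(2.0 * np.logical_and(a, b).sum() / denom)

# Hypothetical 8x8x8 volumes: a 4x4x4 "expert" label and an automated
# label shifted by one voxel along the first axis
truth = np.zeros((8, 8, 8), dtype=bool)
truth[2:6, 2:6, 2:6] = True
pred = np.zeros((8, 8, 8), dtype=bool)
pred[3:7, 2:6, 2:6] = True

print(dice_coefficient(pred, truth))  # 0.75: 48 shared voxels out of 64 + 64
```

A one-voxel shift already costs a quarter of the score on a structure this small, which is one reason small, intricate anatomy such as the cochlea is a demanding segmentation target.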

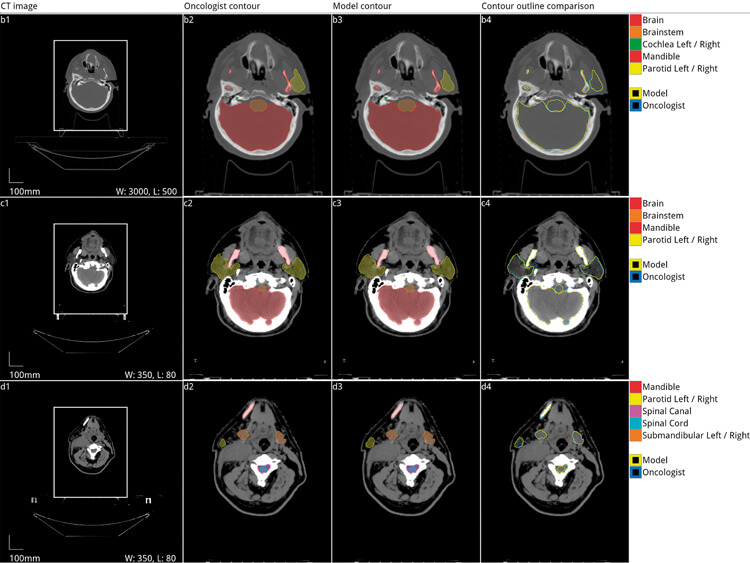

Convolutional neural networks have also been used in rhinology to delineate critical anatomy automatically and to quantify sinus opacification [10-12]. In head and neck oncology, deep learning networks have been used to segment anatomical structures automatically and so accelerate radiotherapy planning [13-18] (Figure 3). For laryngologists, voice analysis software will likely incorporate machine learning classifiers to identify pathology, as these have been shown to outperform traditional rule-based algorithms [19].

Figure 3. Automated segmentation of organs at risk of damage from radiation during radiotherapy for head and neck cancer. Five axial slices from the scan of a 58-year-old male patient with a cancer of the right tonsil, selected from the Head-Neck Cetuximab trial dataset (patient 0522c0416) [20,21].

Adapted with permission from the original authors [13].
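Quantifying sinus opacification from segmentations is, at its simplest, a ratio: the share of the segmented sinus volume that is also labelled as opacified (soft-tissue density rather than air). The sketch below assumes binary NumPy masks for the sinus cavity and for opacified tissue; it is a hypothetical illustration, not the scoring used in the rhinology studies cited above.

```python
import numpy as np

def percent_opacified(sinus_mask: np.ndarray, opacified_mask: np.ndarray) -> float:
    """Percentage of sinus voxels that are also labelled as opacified."""
    sinus = sinus_mask.astype(bool)
    # Ignore any opacified voxels that fall outside the sinus itself
    opacified = np.logical_and(opacified_mask.astype(bool), sinus)
    n_sinus = int(sinus.sum())
    if n_sinus == 0:
        raise ValueError("sinus mask is empty")
    return 100.0 * int(opacified.sum()) / n_sinus

# Hypothetical masks: a 400-voxel sinus whose lowest layer (100 voxels)
# is opacified, plus one stray opacified voxel outside the sinus
sinus = np.zeros((10, 10, 10), dtype=bool)
sinus[:, :, 0:4] = True
opacified = np.zeros_like(sinus)
opacified[:, :, 0] = True
opacified[0, 0, 9] = True

print(percent_opacified(sinus, opacified))  # 25.0
```

Because both counts are taken on the same voxel grid, the voxel volume cancels out and the percentage does not depend on scan spacing.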

Conclusion

In summary, artificial intelligence, and deep learning in particular, will radically change the way we manage patients over the course of our careers. Although developed in high-resource settings, the technology has equally significant applications in low-resource settings, where it can help deliver quality care despite limited human resources. These topics and more will be explored in detail in the scientific programme in Vancouver.

References:

1. Bengio Y, Courville A, Vincent P. Representation learning: a review and new perspectives. IEEE Trans Pattern Anal Mach Intell 2013;35:1798-828.

2. McKinney SM, Sieniek M, Shetty S. International evaluation of an AI system for breast cancer screening. Nature 2020;577:89-94.

3. Heutink F, Kock V, Verbist B, et al. Multi-Scale deep learning framework for cochlea localization, segmentation and analysis on clinical ultra-high-resolution CT images. Comput Methods Programs Biomed 2020;191:105387.

4. Fauser J, Stenin I, Bauer M, et al. Toward an automatic preoperative pipeline for image-guided temporal bone surgery. Int J Comput Assist Radiol Surg 2019;14(6):967-76.

5. Zhang D, Wang J, Noble JH, et al. Deep convolutional neural networks for accurate classification and multi-landmark localization of head CTs. Med Image Anal 2020;61:101659.

6. Nikan S, van Osch K, Bartling M, et al. PWD-3DNet: A deep learning-based fully-automated segmentation of multiple structures on temporal bone CT scans. Submitted to IEEE Trans Image Process.

7. Wang J, Noble JH, Dawant BM. Metal Artifact Reduction and Intra Cochlear Anatomy Segmentation in CT Images of the Ear With A Multi-Resolution Multi-Task 3D Network. IEEE 17th International Symposium on Biomedical Imaging (ISBI) 2020;596-9.

8. Chi Y, Wang J, Zhao Y, et al. A Deep-Learning-Based Method for the Localization of Cochlear Implant Electrodes in CT Images. IEEE 16th International Symposium on Biomedical Imaging (ISBI) 2019;1141-5.

9. Compton EC, et al. Assessment of a virtual reality temporal bone surgical simulator: a national face and content validity study. J Otolaryngol Head Neck Surg 2020;49:17.

10. Laura CO, Hofmann P, Drechsler K, Wesarg S. Automatic Detection of the Nasal Cavities and Paranasal Sinuses Using Deep Neural Networks. IEEE 16th International Symposium on Biomedical Imaging (ISBI) 2019;1154-7.

11. Iwamoto Y, Xiong K, Kitamura T, et al. Automatic Segmentation of the Paranasal Sinus from Computer Tomography Images Using a Probabilistic Atlas and a Fully Convolutional Network. Conf Proc IEEE Eng Med Biol Soc 2019;2789-92.

12. Humphries SM, Centeno JP, Notary AM, et al. Volumetric assessment of paranasal sinus opacification on computed tomography can be automated using a convolutional neural network. Int Forum Allergy Rhinol 2020.

13. Nikolov S, Blackwell S, Mendes R, et al. Deep learning to achieve clinically applicable segmentation of head and neck anatomy for radiotherapy. arXiv [cs.CV] 2018.

14. Tong N, Gou S, Yang S, et al. Fully automatic multi-organ segmentation for head and neck cancer radiotherapy using shape representation model constrained fully convolutional neural networks. Med Phys 2018;45:4558-67.

15. Ibragimov B, Xing L. Segmentation of organs-at-risks in head and neck CT images using convolutional neural networks. Med Phys 2017;44:547-57.

16. Vrtovec T, Močnik D, Strojan P, et al. Auto-segmentation of organs at risk for head and neck radiotherapy planning: from atlas-based to deep learning methods. Med Phys 2020.

17. Zhu W, Huang Y, Zeng L, et al. AnatomyNet: Deep learning for fast and fully automated whole-volume segmentation of head and neck anatomy. Med Phys 2019;46(2):576-89.

18. Tong N, Gou S, Yang S, et al. Shape constrained fully convolutional DenseNet with adversarial training for multiorgan segmentation on head and neck CT and low-field MR images. Med Phys 2019;46:2669-82.

19. Cesari U, De Pietro G, Marciano E, et al. Voice Disorder Detection via an m-Health System: Design and Results of a Clinical Study to Evaluate Vox4Health. Biomed Res Int 2018;8193694.

20. Bosch WR, Straube WL, Matthews JW, Purdy JA. Data From Head-Neck_Cetuximab. The Cancer Imaging Archive 2015.

21. Clark K, Vendt B, Smith K, et al. The Cancer Imaging Archive (TCIA): maintaining and operating a public information repository. J Digit Imaging 2013;26:1045-57.