From Victorian stereograms to AI-generated depth maps, this lecture charts how 3D imaging is reshaping ENT anatomy – and how BACO 2026 will see it at scale.

Since the 19th century, 3D technology has experienced several resurgences in the commercial sector, yet it has never achieved widespread adoption. The initial pioneers of stereogram technology were prominent members of the Royal Society, most notably Sir Charles Wheatstone.

For years, 3D was only a form of ‘light’ entertainment, probably best encapsulated in modern times by Fisher-Price’s ‘View-Master’, which took the user on a 3D tour of Disneyland, the moon or even the solar system. I was excited when I purchased a copy of Gulya and Schuknecht’s Anatomy of the Temporal Bone – Second Edition whilst revising for the once-feared drilling component of the FRCS practical exam. At the back of the book were five reels of the most fantastic 3D temporal bone dissection images one could ever wish to see. It was such a shame that these images were trapped in tiny cardboard reels, only to be enjoyed by someone who had either kept hold of their own red View-Master or managed to purchase a second-hand one on eBay!

It was not until the 1980s that 3D technology truly made a significant impact in the field of surgery. The endoscope catalysed a demand for depth perception, as conventional monocular scopes only provided a single-lens perspective. Imagine having to operate in open surgery with one eye closed! A German surgeon, Dr Gerhard Buess, a pioneer in minimally invasive colorectal surgery, developed a single-surgeon binocular view endoscope.

The concept caught the attention of Boston Scientific and formed part of the early story of surgical robotics. Consequently, the race was on to create a 3D surgical robotic system. Notably, the first Da Vinci robot, ‘Leo’, employed an active 3D shutter glass system, moving away from direct viewing into the realm of displaying the image on a television system so that trainees could share the depth experience. Active 3D works by rapidly alternating images presented on a screen in sync with battery-powered glasses that black out one eye at a time. This rapid flickering is not to everyone’s liking and generally darkens the projected image. It was years later that the company transitioned to the stereoscopic HD video viewer that is widely recognised today as being a comfortable, clear and bright 3D representation of anatomy from the twin camera endoscope.

In the modern era, most companies that have developed high-quality 3D digital endoscopes predominantly use passive 3D systems. These systems employ polarised light to present distinct images from alternate pixel lines to each eye at the same time; viewed through simple polarised glass lenses, the two images are merged by the brain into a single representation with depth. In laparoscopic surgery, this approach proves highly effective. However, constant unpredictable movement and changes in horizon during live surgery can make 3D viewing challenging and potentially uncomfortable for extended periods. This may be the reason why the very capable standalone 3D endoscope never quite captured the imagination of surgeons until it was mounted onto a surgical robot arm where horizon stabilisation was achieved. Undoubtedly, the images provided by these systems offer a significantly superior view compared to those offered by monocular scopes, as well as harnessing digital light technologies to view changes beyond the capabilities of white light alone.
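The line-by-line principle behind passive 3D can be illustrated with a minimal sketch. It assumes greyscale images stored as plain Python lists and assigns even rows to the left eye and odd rows to the right; that assignment, and the names `interleave_rows` and `split_rows`, are illustrative choices rather than any manufacturer's implementation.

```python
from typing import List, Tuple

Image = List[List[int]]  # rows of greyscale pixel values


def interleave_rows(left: Image, right: Image) -> Image:
    """Build one frame whose even rows come from the left view and odd rows from the right."""
    if len(left) != len(right) or any(len(a) != len(b) for a, b in zip(left, right)):
        raise ValueError("left and right views must have identical dimensions")
    return [list(left[y]) if y % 2 == 0 else list(right[y]) for y in range(len(left))]


def split_rows(frame: Image) -> Tuple[Image, Image]:
    """Return the rows each eye would receive through its polarised lens."""
    left_eye = frame[0::2]   # even rows (assumed left-eye polarisation)
    right_eye = frame[1::2]  # odd rows (assumed right-eye polarisation)
    return left_eye, right_eye


# Toy 4x4 views: the left view is all 10s, the right view all 20s.
left_view = [[10] * 4 for _ in range(4)]
right_view = [[20] * 4 for _ in range(4)]

frame = interleave_rows(left_view, right_view)
left_eye, right_eye = split_rows(frame)
```

In this sketch each eye receives only every other line, so both views share a single frame at the cost of half the vertical resolution per eye.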

With the advent of artificial intelligence, we have witnessed a remarkable advance in image quality. The consumer market now expects 4K resolution as an absolute minimum standard, with high frame refresh rates reducing motion blur artefact. Real-time image upscaling, previously a formidable challenge in 3D systems, has become a reality in surgical viewing systems. This capability is enabled by machine learning methods, which analyse a single image and discern apparent shadows and contour variations to generate a greyscale depth map. These depth maps provide additional data that facilitates the instantaneous reproduction of a duplicate image from the original, albeit from a different perspective.
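How a depth map can yield a second viewpoint is easiest to see in a toy example. The sketch below is not any vendor's method: it assumes the depth map already exists (here it is written by hand rather than estimated by machine learning), that 255 means 'near' and 0 means 'far', and that a simple horizontal pixel shift stands in for the change of perspective. The function name `synthesise_second_view` and the parameter `max_shift` are hypothetical.

```python
from typing import List, Optional

Image = List[List[int]]  # rows of greyscale pixel values


def synthesise_second_view(image: Image, depth: Image, max_shift: int = 2) -> Image:
    """Shift each pixel left by an amount proportional to its depth value.

    depth uses 0 for far and 255 for near (an assumed convention), so nearer
    pixels move further, which is what produces the parallax between views.
    """
    height, width = len(image), len(image[0])
    result: Image = []
    for y in range(height):
        row: List[Optional[int]] = [None] * width
        # Paint far pixels first so that nearer pixels overwrite them.
        for x in sorted(range(width), key=lambda i: depth[y][i]):
            shift = round(depth[y][x] / 255 * max_shift)
            new_x = x - shift
            if 0 <= new_x < width:
                row[new_x] = image[y][x]
        # Fill gaps from the right-hand neighbour (usually background).
        fill: Optional[int] = None
        for x in range(width - 1, -1, -1):
            if row[x] is None:
                row[x] = fill
            else:
                fill = row[x]
        # Any gaps left at the right edge take the nearest value to their left.
        last = 0
        for x in range(width):
            if row[x] is None:
                row[x] = last
            else:
                last = row[x]
        result.append([int(v) for v in row])
    return result


# One image row with a 'near' object occupying the fourth and fifth pixels.
image = [[1, 2, 3, 4, 5, 6]]
depth = [[0, 0, 0, 255, 255, 0]]
second_view = synthesise_second_view(image, depth, max_shift=2)
```

Paired with the original image, the shifted copy forms a stereo pair. The gap-filling here is deliberately crude; a real-time system would need a far better way of painting in the background revealed behind near objects.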

As computational speed continues to increase, we are entering an era where the generation of 3D images may no longer necessitate the use of dedicated 3D cameras. Apple has introduced this technology into their latest Vision Pro headset, which can instantly convert any 2D image into a 3D picture. Taking this machine-learning method further, developers can do the same with recorded video. It will only be a matter of time before this can be incorporated into live video. There will come a time when any 2D digital endoscope image can be coded into a live 3D view at the touch of a button.

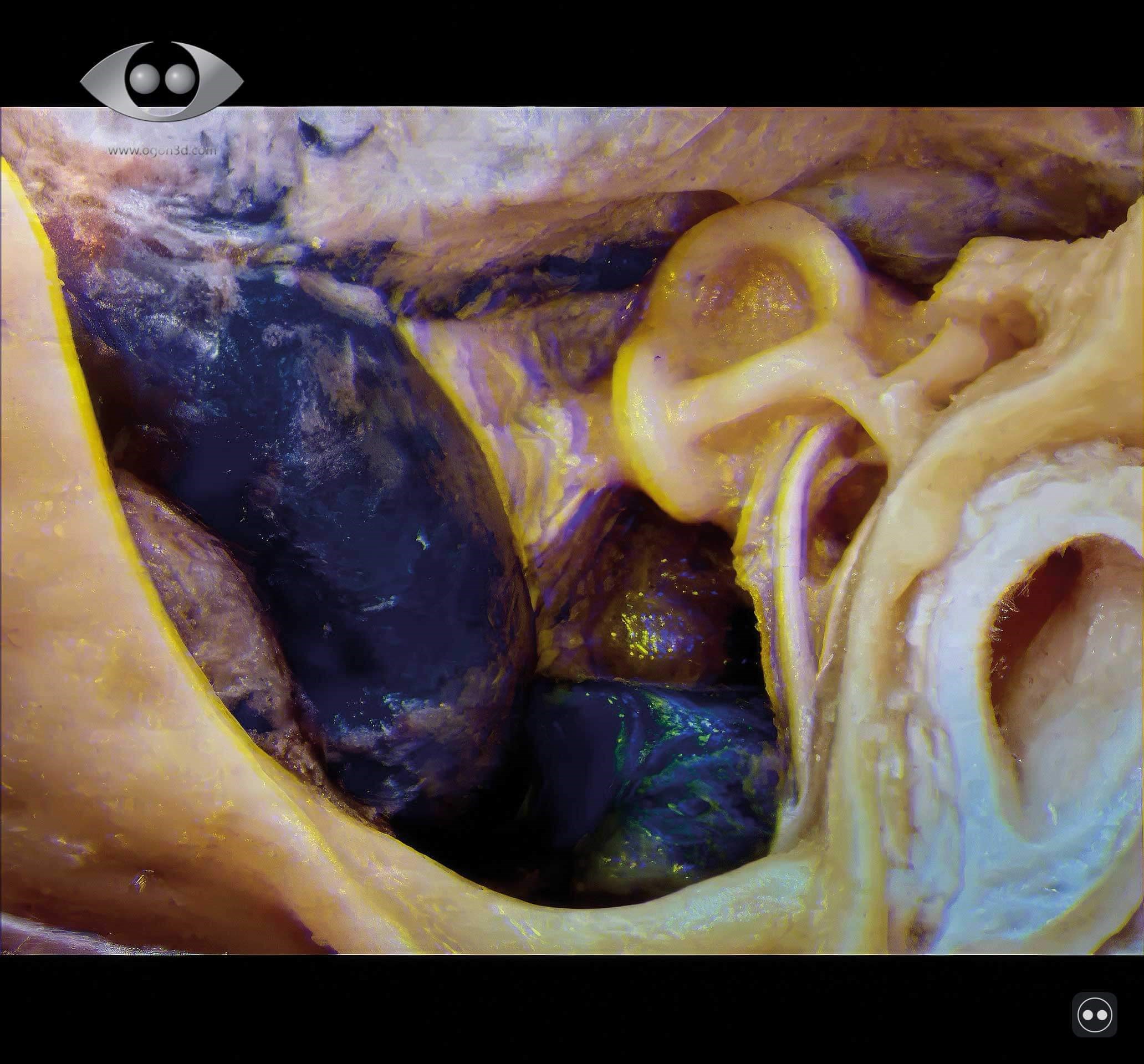

Beyond capturing and storing 3D images, the next challenge involved disseminating this material widely to the benefit of the medical and scientific community. In July 2026, I am honoured to be delivering the Lionel Colledge Lecture. Colledge, a pioneer of ENT surgery in my own field of head and neck, was a key figure in the development of laryngectomy. He published on his operative techniques as well as the pathologies he managed. At BACO 2026, I intend to present 3D images on a mass scale. These images will be produced by novel machine-learning methods in combination with the reinvigoration of historic techniques that, with advancements in computing, have found renewed relevance in the medical field. The objective is to illustrate the profound shift in our understanding of intricate anatomical spaces, including the cavernous sinus, parapharyngeal space, infratemporal fossa, pterygopalatine fossa, lateral nasal wall and middle-ear medial wall.

Figure 1: Infitec filter lenses.

Presenting 3D content to a large audience poses significant scientific challenges with the currently available technologies. Infitec, a German-based company, has developed bespoke light filtering technology that eliminates the need for the silver screen (Figure 1). This advancement could facilitate large-scale 3D presentations to audiences on any white surface. Nevertheless, the initial cost for an event like BACO would have exceeded the budget allocated for the Colledge Lecture.

Consequently, I faced a difficult decision: to use red-cyan anaglyph or to forgo 3D images altogether in a lecture celebrating the benefits of 3D imaging in ENT anatomy. Fortunately, I came across a captivating news story about a Danish company, ColorCode 3D, which presented all the advertisements of the 2009 Super Bowl half-time show in the US to over one million people on their own televisions. An image of the Obamas watching the event wearing specially designed amber-blue versions of paper 3D glasses particularly resonated with me (Figure 2a).

Figure 2a: ColorCode 3D broadcast across the USA during the Super Bowl half-time adverts.

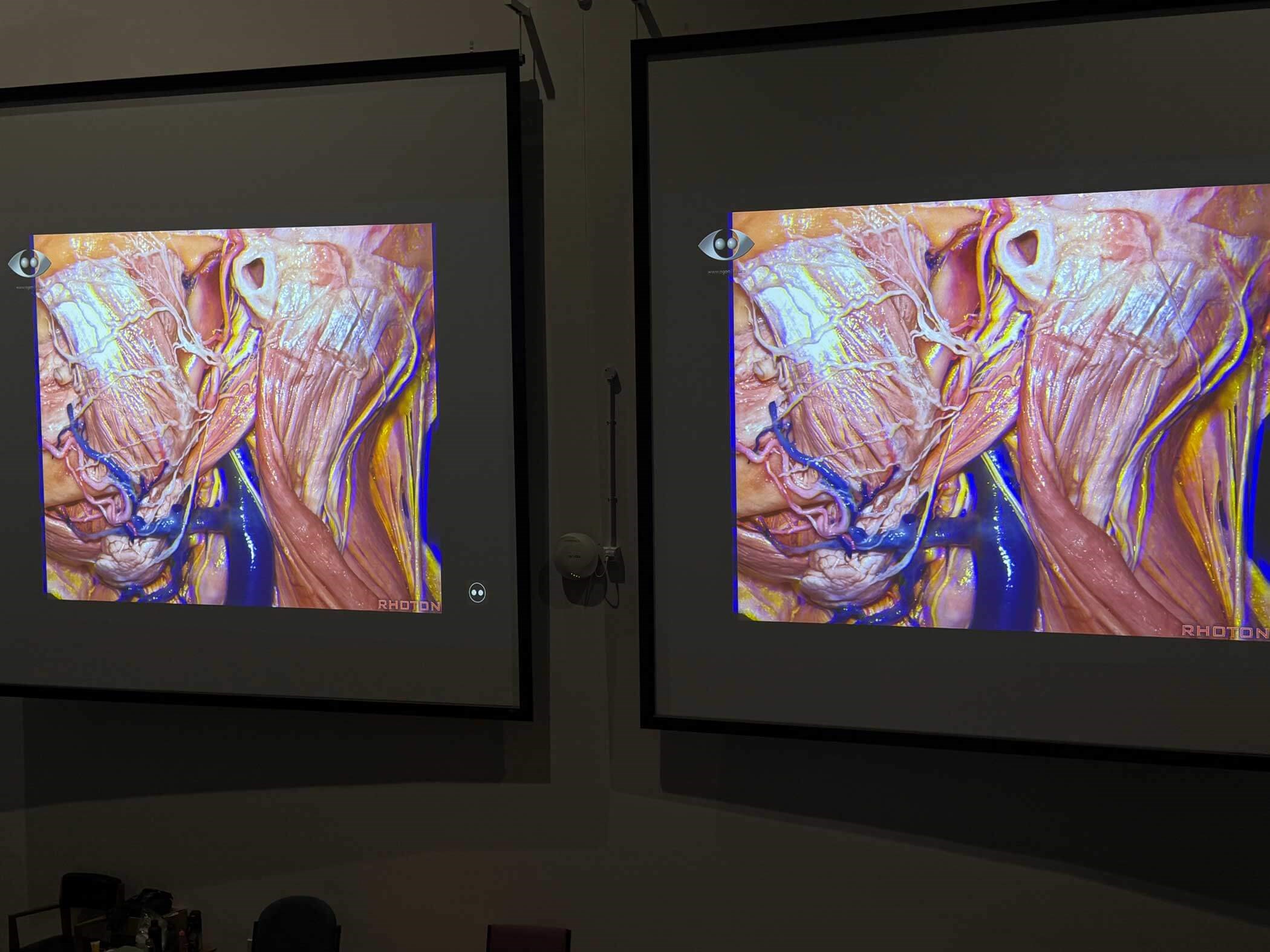

Realising that this technology was suitable for the President of the United States, I believed it would impress my ENT colleagues at BACO 2026 (Figure 2b).

Figure 2b: A ColorCode image projected to a large audience in a lecture theatre.

I eagerly anticipate explaining not only the rationale behind 3D imaging but also the practical implementation of this technology, incorporating examples that fuse 3D imaging, artificial intelligence and a touch of imagination (Figure 2c).

Figure 2c: Machine-learning-enhanced ColorCode projection of modern 3D Ogon techniques at BACO 2026.

I am also looking forward to the opportunity to connect with many of you at a long-awaited meeting, which consistently ranks as a highlight of my calendar.

Ajith George will deliver the Lionel Colledge Lecture at BACO 2026 in Glasgow, UK, in July.

For further information visit: www.entuk.org/baco

Declaration of competing interests: AG is the Medical Director for endoscope-i Ltd.