In 2069 will we look forward to being enslaved by robots, becoming zombies or having our health (and ill health) diagnosed by nanotech? Ajith George muses over what the future holds for us all.

The future of healthcare, not just otolaryngology, in the next 15 years is likely to be defined by robotics, gene and immunotherapy, apps, telemedicine, remote diagnosis, artificial intelligence (AI) and nanotechnology. Excited? Certainly, it’s hard to curb the enthusiasm of most of us in ENT, but should we proceed with caution?

Figure 1. Concept image rendered by the author of a nanotechnology smart monitor for a popular topical nasal spray to assess patient compliance with medication.

2034

The aforementioned technologies will shape how we monitor and diagnose patients. I see the potential to incorporate a nanotech monitor into a ‘smart pill’ aerosol that provides data on how reliably a patient uses their topical steroid (see Figure 1).

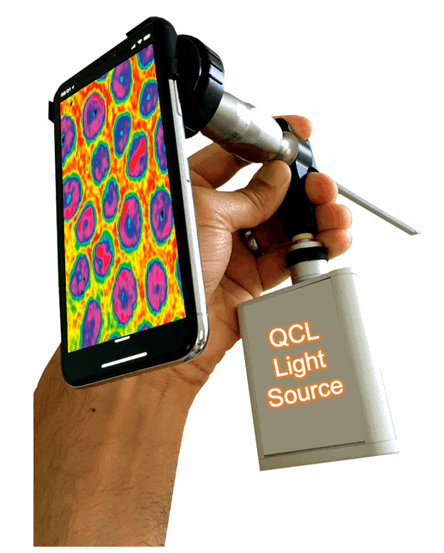

Or perhaps we will see the properties of light, harnessed through infrared quantum cascade LASERs (QCL) and spectral algorithms, used for ‘light diagnostics’ during outpatient laryngoscopy for early cancer detection. For the latter, huge amounts of data will need analysing by a portable computer fast enough to process multiple permutations into an intelligible answer. This is the next step in endoscopic mobile technology (see Figure 2).

Figure 2. Concept image rendered by the author of a miniaturised portable Quantum Cascade LASER for diagnosing cancer in the larynx when attached to a smart phone endoscope.

The last 15 years have witnessed unprecedented technological advancements born out of the digital era. However, flip that round to medicine and it seems unfortunate that this dramatic change has not been replicated. Most mobile devices are still frowned upon in healthcare, appointments are still allocated at specific times during the ‘normal working day’, and seeking up-to-date evidence-based information involves either trawling through masses of online literature of varying quality or, if you are fortunate, reading the systematic review from the person who has done that work already.

I think many of us would like to see medicine, and indeed otolaryngology, evolve at the same pace as domestic technology. There are many examples of technology we take for granted at home that, for some strange reason, we cannot replicate in healthcare, but there are signs of change. The NHS appears to be overcoming the ‘ban mobile devices’ dogma but is faced with the ever-increasing problem of cost. New tech costs money and, in particular, technology in healthcare has a bad reputation for not working. Let us hope those two barriers are lowered in the next 15 years. To make that happen, ENT clinicians are integral to the change we want to see in our speciality. So, if we envisage using ‘Alexa’s’ or ‘Hey Google’s’ AI diagnostic algorithms to support primary caregivers with the most up-to-date high-level evidence on managing common ENT conditions, and we want to see telemedicine as the new secondary care referral system to reduce conventional outpatient appointments, then is it not up to us to create it?


Our speciality has a strong track record for wearables: 1895 witnessed the development of the very first ‘wearable’ that we all take for granted, the hearing aid! In fact, it’s so successful that we don’t really see it as an innovation, and that marks its success. The new technologies that we use in the next 15 years that aren’t talked about and just ‘work’ are most likely going to be our greatest success, born out of need rather than the desire to develop. As for smartpatches, smartpills and stockpiling mounds of data on your smartphone collated by Apple Watch or Fitbit, it certainly captures our technical imagination as ENT clinicians but it’s doubtful whether it is going to really help us day to day.

Physical space in healthcare comes at a significant cost, whereas ‘virtual’ referrals occupy space in the cloud. The NHS must invest heavily in UK-based Health Cloud Systems to manage NHS data. APIs (application programming interfaces) can be created that are compatible with differing native software to access this data, thus creating the long-awaited shared care record. Patients could then get a consultant opinion at any time of the day and not have to wait six weeks, or often more, to get this advice. ENT pathology fits this model perfectly, given that the ear, nose and throat are examined using endoscopes and camera systems capable of recording.

50 years – 2069

The inexorable rate at which healthcare technology is developing could potentially lead to unforeseen problems. The truth is, we have no idea what the next 50 years will bring, but the rate of change will be considerably more rapid than in the previous half century. In 1970 the futurist and author, Alvin Toffler, coined the term ‘future shock’, and his eponymous book warns of a public disconnect due to technology moving too fast to comprehend [1]. Are we in danger of ‘future shocking’ clinicians? Toffler believed that for society to progress it was not inventions, products or technologies that should advance rapidly but society’s ideals. He proposed that mankind’s key to a better future was based around us taking care of the elderly, showing more compassion and honesty, and employing more healthcare workers, particularly those with emotional and affectional skills. Toffler could not have envisaged technological advancements in AI where robots start to learn, have emotions and potentially look after elderly people living on their own.

Medicine in 2069 will make greater use of AI to enable us to make faster, evidence-based ‘rational’ decisions. This poses some interesting philosophical dilemmas. Take, for example, the ‘self-driving car’, heralded as the pinnacle of artificial intelligence until the reports of deaths caused by crashes [2]. Are we as humans willing to accept the decisions made by artificial intelligence, a system based on logic and algorithms, supposedly the mathematically correct and best decision at that time, even if it results in a death?

Then there’s the issue of the effect of the use of AI on clinicians. Psychologist, Herbert Gerjuoy, is famously quoted as saying, “tomorrow’s illiterate will not be the man who cannot read; he will be the man who has not learned how to learn”. Will the machines become so good at diagnosing that there’s simply no need for a doctor to make this decision? Even more concerning is when the machines start to think for themselves. It’s the stuff of science fiction. ‘Skynet’ became self-aware in 1997 in the Terminator franchise, sparking a war in which machines turned on humans, and in Will Smith’s I, Robot, the machines realised, in 2035, that the best way to protect humans was to enslave them! On a similar theme, are we to fear the negative impact of gene therapy portrayed in Fox’s The Walking Dead, where experiments to protect humans from life-threatening disease resulted in the creation of flesh-hungry zombies?

Figure 3. Dialogue created by Facebook’s AI chatbots before they were switched off.

Back to the real world, there are early signs of science fiction. In 2017, Facebook had to abandon an experiment after two AI programmes they created started communicating with each other autonomously in a strange language only the computers understood (see Figure 3) [3]. Their aim was to create ‘chatbots’ that were capable of holding an independent, self-perpetuating conversation. However, when the machines realised that the English language was too inefficient to communicate quickly, they started to create their own language, and that’s when Facebook decided to ‘terminate’ the programme. Meanwhile, European countries have developed dedicated lanes and traffic light systems for ‘smombies’, smartphone zombies so focused on their personal devices that they are oblivious to the world around them (www.bbc.co.uk/news/technology-38992653).

Conclusion

The future of diagnosis and monitoring will certainly see a focus on ‘WellCare’ (maintaining wellness) as opposed to healthcare (managing illness). It is better to be preventative than reactive, but currently we seem to be in the proactive stage. The products we need to develop to become preventative will need to be cost-efficient and developed by those who use them, to make them purposeful and to encourage a fundamental principle of being human: to care.

References

1. Toffler A. Future Shock. New York, USA; Bantam Books Inc; 1971.

2. Stewart J. Tesla’s autopilot was involved in another deadly car crash. Wired. www.wired.com/story/tesla-autopilot-self-driving-crash-california Last accessed January 2019.

3. Griffin A. Facebook’s artificial intelligence robots shut down after they start talking to each other in their own language. Independent. www.independent.co.uk/life-style/gadgets-and-tech/news/facebook-artificial-intelligence-ai-chatbot-new-language-research-openai-google-a7869706.html Last accessed January 2019.

Declaration of Competing Interests: AG is the Medical Director for endoscope-i Ltd, an innovation SME specialising in endoscopic mobile imaging.